Why does deep learning work so well?

That question has a surprising answer. Not a computer science answer. A physics answer.

This video is worth watching: Deep Learning's Bizarre Connection to How Modern Physics Works

Modern physics has a technique called the renormalization group. The basic idea: when you want to understand a complex physical system, you do not need all the fine-grained details. You can "integrate out" the small-scale noise and find that the large-scale behavior is governed by a much simpler description. Reality organizes itself hierarchically. Each level of scale has its own governing structure. The details at one level become irrelevant at the next.
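To make "integrating out" concrete, here is a minimal sketch of one classic coarse-graining scheme: a majority-rule block-spin step on a square grid of +1/-1 values. It is a toy illustration under stated assumptions. The function name `coarse_grain` and the 3x3 block size are illustrative choices, not taken from the video or any paper, and a real renormalization group calculation would also track how the couplings between spins change at each step.

```python
import random


def coarse_grain(lattice, block=3):
    """Replace each block x block patch of +1/-1 spins by its majority sign.

    Assumes a square lattice. An odd block size means the patch sum is never
    zero, so there are no ties. Rows and columns that do not fill a whole
    block are dropped.
    """
    n = len(lattice) // block
    coarse = []
    for bi in range(n):
        row = []
        for bj in range(n):
            total = sum(
                lattice[bi * block + di][bj * block + dj]
                for di in range(block)
                for dj in range(block)
            )
            row.append(1 if total > 0 else -1)
        coarse.append(row)
    return coarse


if __name__ == "__main__":
    rng = random.Random(0)
    # A 27x27 grid that is mostly +1, with about 10% of sites flipped at random.
    fine = [[-1 if rng.random() < 0.1 else 1 for _ in range(27)] for _ in range(27)]
    level = fine
    while len(level) >= 3:
        level = coarse_grain(level)
        flipped = sum(row.count(-1) for row in level)
        print(f"{len(level)}x{len(level)} grid, {flipped} sites at -1")
```

Each pass shrinks the grid by a factor of three per side, and isolated flips tend to be outvoted by their neighbors. That is the sense in which small-scale detail stops mattering at the next scale.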

This is more than a loose metaphor for what deep neural networks do. In at least one well-studied case, it is mathematically the same operation.

A neural network processes an image and extracts edges in the first layer. The second layer finds shapes from those edges. The third finds objects from those shapes. Each layer integrates out the fine-grained information and extracts the structure that governs the next level up. That is the renormalization group running in silicon.

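Researchers have shown this formally in a specific setting. In 2014, Pankaj Mehta and David Schwab constructed an exact mapping between a variational renormalization group scheme and deep networks built from stacked restricted Boltzmann machines. The mathematics that physicists use to understand phase transitions, particle interactions, and large-scale structure in the universe shares its structure with the mathematics of deep learning. This was not engineered in. It was discovered.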

The deeper claim is more important.

Deep learning does not just accidentally resemble physics. It works as well as it does because the universe itself has mathematical structure that makes learning possible. Reality is not arbitrary. It is hierarchical, symmetric, sparse, and local. Those properties mean that the data we observe from reality is compressible. And compressibility is what neural networks exploit.
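A quick way to see what "compressible" means here is to hand structured and unstructured data to an ordinary compressor. The sketch below uses Python's standard `zlib` module as a stand-in. It is not how a neural network compresses anything, and the staircase signal and the name `compression_ratio` are illustrative assumptions, but it shows the gap between data with local, repeating structure and data with none.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (smaller means more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


N = 16384
# "Structured" data: a slow staircase, so neighboring values are equal or close
# and the whole pattern repeats.
structured = bytes((i // 16) % 256 for i in range(N))
# "Arbitrary" data: pseudo-random bytes with no pattern zlib can exploit.
rng = random.Random(0)
noise = bytes(rng.getrandbits(8) for _ in range(N))

print(f"structured: {compression_ratio(structured):.3f}")
print(f"noise:      {compression_ratio(noise):.3f}")
```

The structured signal shrinks to a small fraction of its size, while the noise barely shrinks at all. If reality looked like the second case, there would be nothing for a network to learn.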

Lin, Tegmark, and Rolnick's paper "Why Does Deep and Cheap Learning Work So Well?" puts this directly: neural networks work because the physical universe has properties (symmetry, locality, hierarchy) that make it amenable to hierarchical compression. The universe is structured in a way that makes it learnable.

That is a profound statement. It means the effectiveness of AI is not primarily a computer science achievement. It is a consequence of the mathematical structure of reality.

This connects the threads.

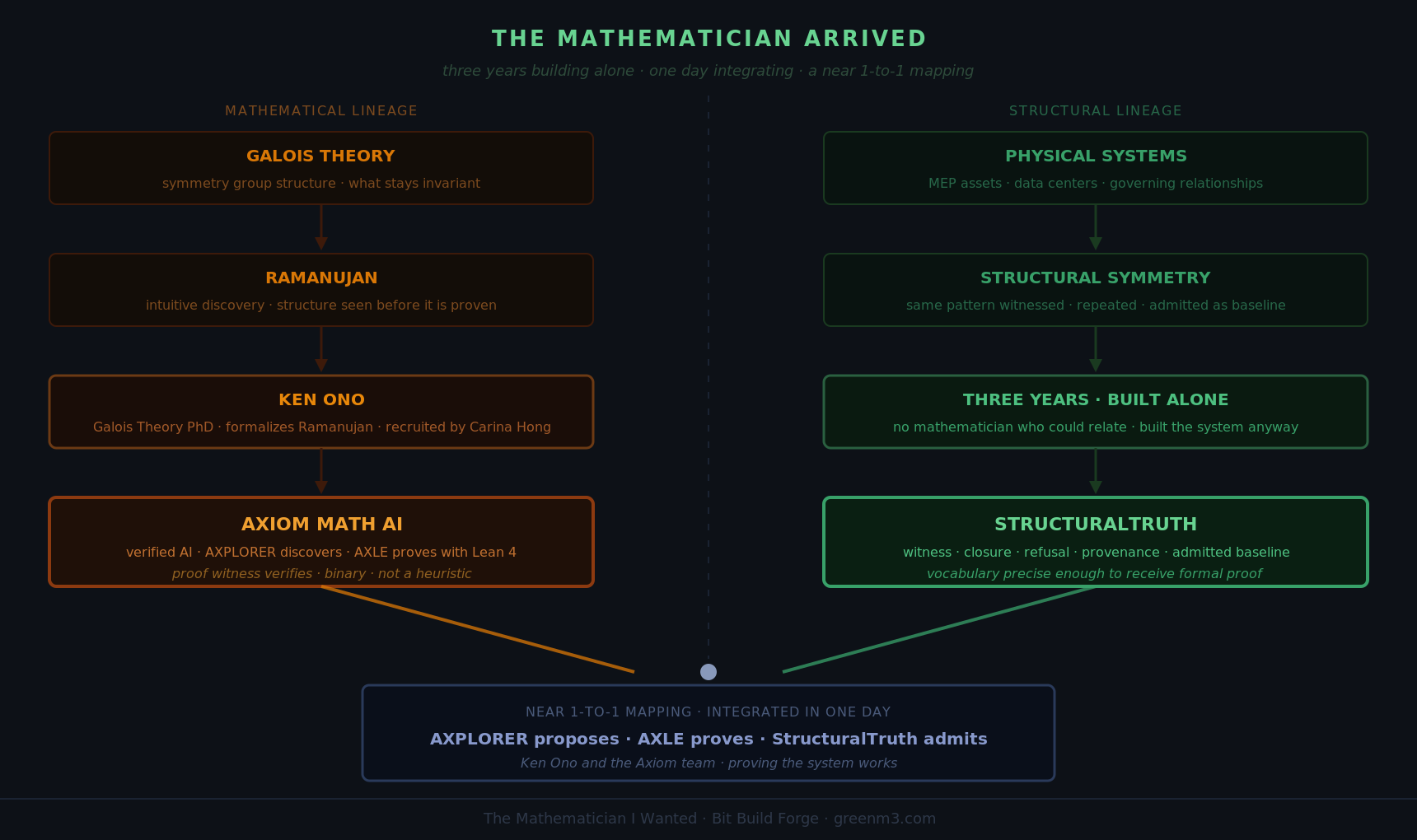

Galois theory asks what stays invariant under transformation: what symmetries govern the structure of a system. The renormalization group asks the same question at different scales: what is preserved as you move from fine to coarse. Deep learning asks it implicitly at every layer: what structure survives the compression?

They are all versions of the same question: what is the mathematical shape of what is actually there?

AI is not imitating intelligence. It is not mimicking thought. It is executing the mathematics that describes how reality is organized — layer by layer, scale by scale, from noise to structure to meaning.

The GPU is the instrument. The mathematics is the physics. The learning is the discovery.


— Dave / greenm3